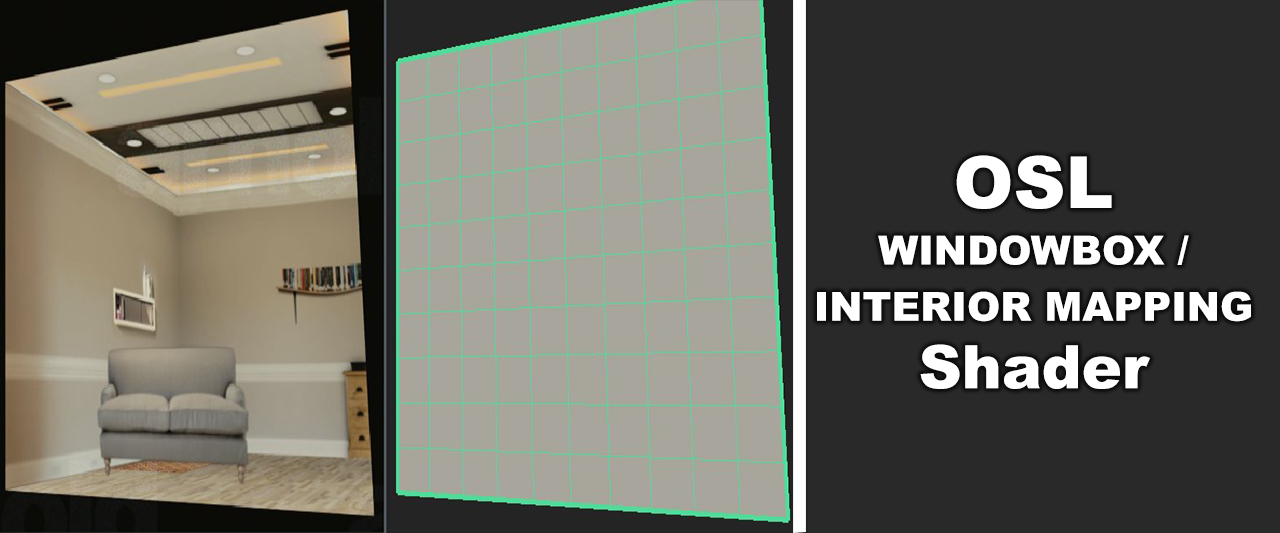

I remember one of our teachers at university telling us how all the building interiors in Spiderman were rendered with just a simple plane and a shader that simulates the room interior with proper parallax. That has stuck in my mind ever since, and I saw it used at some of the studios I worked at whenever there was a big city to be rendered. I finally found the time (... too much time :) ) to write this sort of shader myself in OSL!